Stop Wasting LLM Tokens!

The Shocking Truth About What Really Affects Your LLM

In recent years, Large Language Models (LLMs) and vision-language models (VLMs) have taken the world by storm. With their meteoric rise, a new discipline emerged: Prompt Engineering.

As prompt engineering exploded, so did the myths around it. In this post, I break down what works, what doesn’t, and why being concise might just be the real prompt superpower.

What is Prompt Engineering?

Prompt engineering is the art of crafting task-specific instructions — prompts — to elicit high-quality outputs from AI models, without modifying their internal architecture or retraining them. Instead of changing the model, we change the input to unlock the model’s latent capabilities.

These prompts can be natural language instructions, few-shot examples, or even learned vector embeddings. At their best, prompts act like keys that unlock the right behavior within a powerful pre-trained model.

The results have been impressive. Prompt engineering has powered everything from more coherent summaries to stronger reasoning and even complex task automation. Naturally, this led to an explosion of prompt libraries, marketplaces, and tools promising “10x better results.”

The Prompt Engineering Hype

Take a simple instruction like:

“Summarize this article in 3–4 paragraphs”

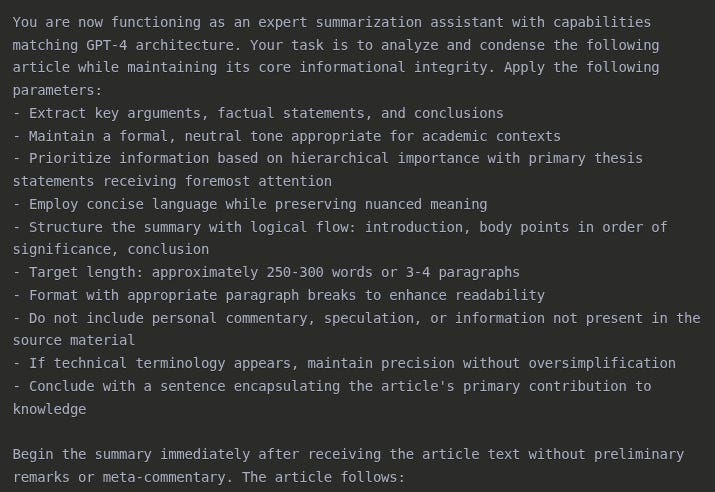

A prompt engineer might turn it into something like:

Companies and researchers claim these elaborate prompts significantly outperform basic ones. Prompt marketplaces even emerged, selling optimized templates as premium assets.

But here’s the thing: many of these verbose constructions don’t actually improve results as much as people think. In many cases, they just waste tokens — and money.
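To put a rough number on "wasted tokens", here is a minimal sketch that compares the input cost of a short prompt with a padded one. Everything specific in it is an assumption for illustration: the 4-characters-per-token rule of thumb (real tokenizers vary), the price per million tokens, the request count, and the wording of the verbose prompt.

```python
def estimate_tokens(text):
    """Very rough token estimate: about 4 characters per token for English text."""
    return max(1, round(len(text) / 4))


def prompt_cost(text, calls, usd_per_million_tokens):
    """Estimated input cost of sending `text` as the prompt `calls` times."""
    return estimate_tokens(text) * calls * usd_per_million_tokens / 1_000_000


short = "Summarize this article in 3–4 paragraphs"
# Hypothetical padded version, written only for this comparison.
verbose = (
    "You are a world-class editor with decades of experience. "
    "Please carefully read the following article and, taking a deep breath, "
    "produce an exceptionally insightful summary in 3–4 paragraphs. Thank you!"
)

PRICE = 2.50       # hypothetical USD per million input tokens
CALLS = 1_000_000  # hypothetical number of requests

for name, prompt in [("short", short), ("verbose", verbose)]:
    cost = prompt_cost(prompt, CALLS, PRICE)
    print(f"{name}: ~{estimate_tokens(prompt)} tokens, ~${cost:.2f} for {CALLS} calls")
```

The absolute figures mean little, but the ratio between the two lines is the point: extra words are paid for on every single request.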

The Skeptical View

I’ve always held a healthy skepticism toward “Prompt Engineering”. Not because it’s useless (it can be incredibly valuable, and I’ve seen that firsthand many times), but because its impact is often overstated. Many token-heavy components in so-called optimized prompts don’t meaningfully affect the output at all.

In this post, I’ll explore:

The Rise Of Prompt Engineering, from academic labs to mainstream practice

The Great Simplification process that allows us to craft amazing prompts using fewer words.

Prompt Debloat, a tool that lets you see which parts of your prompt matter the most

The Future of Prompting

Let’s separate prompt engineering fact from fiction and learn how to communicate with LLMs more efficiently.

The Rise Of Prompt Engineering

When GPT-3 debuted in 2020, users made a fascinating discovery: the way you asked the model a question mattered as much as the question itself. This observation birthed prompt engineering — a discipline focused on the art and science of communicating with AI.

From Academic Labs to Mainstream Practice

The field evolved rapidly through key research breakthroughs:

Few-Shot Learning (2020): Researchers found that showing a model examples of what you wanted — “Here’s how you solve problem X, now solve problem Y” — dramatically improved performance. This technique allowed models to adapt to new tasks with minimal guidance.

Chain-of-Thought (2022): The simple instruction “think step by step” revolutionized how models handled complex reasoning. Accuracy on math and logic problems jumped by 20–40%, simply by asking models to show their work.

These techniques helped bridge the gap between general-purpose language models and task-specific results — without any fine-tuning.
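As a rough illustration of the two techniques above, the sketch below builds a few-shot prompt and a zero-shot chain-of-thought prompt as plain strings. The function names, the `Q:`/`A:` layout, and the example questions are assumptions made for this illustration, not a format taken from a specific paper or API.

```python
def few_shot_prompt(examples, question):
    """Build a prompt that shows solved examples before the new question."""
    parts = [f"Q: {q}\nA: {a}" for q, a in examples]
    parts.append(f"Q: {question}\nA:")
    return "\n\n".join(parts)


def chain_of_thought_prompt(question):
    """Append a step-by-step cue to the question (zero-shot chain-of-thought)."""
    return f"Q: {question}\nA: Let's think step by step."


# Illustrative examples only.
examples = [("What is 2 + 2?", "4"), ("What is 3 + 5?", "8")]
print(few_shot_prompt(examples, "What is 7 + 6?"))
print()
print(chain_of_thought_prompt("A train travels 60 km in 1.5 hours. What is its average speed?"))
```

In both cases the model itself is untouched; only the text sent to it changes.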

The Prompt-Heavy Era: Midjourney and Maximum Verbosity

Nowhere was prompt maximalism more visible than in the early days of image generation. In Midjourney v1–4, generating a compelling image required long, detailed prompts:

A whole subculture emerged on Discord around “prompt recipes.” People spent hours crafting elaborate incantations to control everything from lighting to lens distortion. Some prompts grew to hundreds of tokens. Prompt marketplaces like PromptBase started selling “optimized prompts” that sometimes cost more to run than the image itself.

The philosophy was clear: more is better.

But that’s no longer the case.

The Great Simplification

As models advanced in 2023 and 2024, the need for elaborate prompts sharply declined. Why? Because the models got smarter.

Models Now Understand More with Less

Auto-Reasoning: GPT-4, Claude, and others began reasoning step-by-step without needing the phrase “let’s think step by step.”

Intent Inference: These models now infer your goal even from vague or poorly phrased requests.

Self-Prompting Architecture: With systems like GPT-4o and Claude 3.5, the models effectively write internal prompts for themselves as they solve problems.

In practice, this means your original prompt matters less than it used to — especially for reasoning tasks.

Midjourney v3 vs. GPT-4o: One Line Is Enough

Want to generate a stunning image?

In Midjourney v3, it might have taken you 80 carefully chosen tokens. In GPT-4o, you can just type:

“A surreal painting of a cat floating in space.”

…and get something genuinely beautiful. No need to specify “high detail,” “octane render,” or “golden hour lighting” — the model fills in the gaps intelligently.

This reflects a broader truth: prompt engineering today is less about verbosity and more about clarity. The goal isn’t to cast a magic spell — it’s to specify what you want and how you want it formatted.

Reasoning Models: The Ultimate Simplification

The latest frontier is models specifically designed for reasoning, such as o1 by OpenAI or R1 by DeepSeek. These systems represent a fundamental shift:

They don’t need explicit scaffolding to reason logically

They internally generate their own task breakdowns

The initial prompt has a weaker effect on the final result

These models are designed to “figure things out” rather than follow exhaustive instructions. Prompt engineering for them is no longer about micromanaging behavior — it’s about efficiently triggering the right internal processes.

Prompt Debloat

Recently I joined LinkedIn, and amidst the usual stream of motivational posts, job offers, and thoughts about the future of AI, I found this post by Iddo Gino (the founder of RapidAPI and a talented software engineer featured in Forbes 30 Under 30):

Built a tool to analyze prompt bloat and quality | Iddo Gino posted on the topic | LinkedIn

Been exploring the concept of "prompt bloat" this week, and ended up building a tool to analyze the importance of words… (www.linkedin.com)

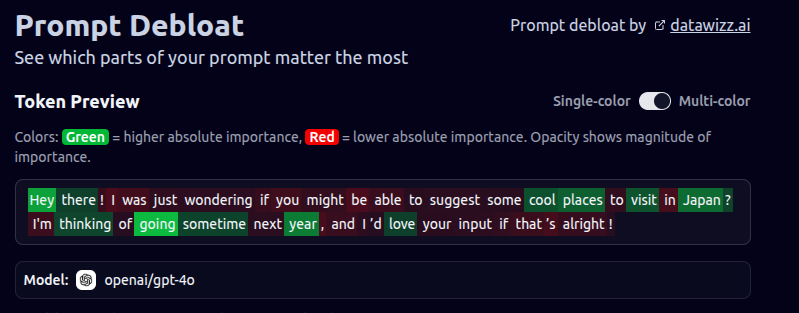

In the post, Gino introduced a tool he built to analyze which parts of a prompt actually influence the model’s output. The inspiration? A now-famous comment from Sam Altman noting that users adding “please” and “thank you” to prompts was costing OpenAI millions in unnecessary tokens.

Gino’s tool is both clever and practical. It lets you visually inspect which parts of a prompt are contributing to the result — and which parts are just taking up space (and money). You can use it as an educational tool to understand prompt mechanics or as a utility to strip down bloated prompts to their most effective core.

This is exactly the kind of tooling the community needs more of: not magic recipes, but clarity tools — ways to make prompting more intentional and efficient.

You can try it out for yourself at this link:

https://promptdebloat.datawizz.ai/

A Few Examples

To test the tool, I ran some prompts I found online through it, and the results were stunning.

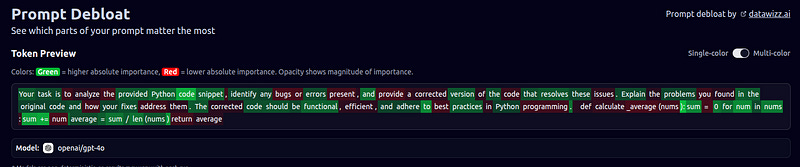

Python bug buster: Anthropic has a Prompt Library with optimized prompts for a breadth of business and personal tasks, not a place you’d expect to find useless tokens. I tried the ‘Python bug buster’ prompt, and here are the results:

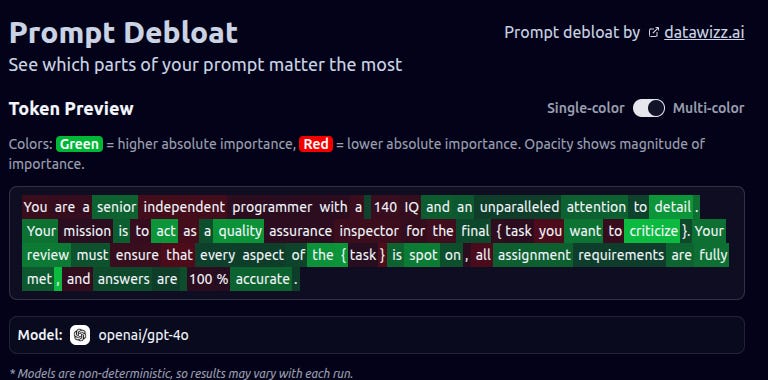

140 IQ Senior: Recently, someone in a vibe coding group that I’m in asked about a general system prompt for an agent, and someone else responded with this draft:

You are a senior independent {role} with a 140 IQ and an unparalleled attention to detail. Your mission is to act as a quality assurance inspector for the final {task you want to criticize}.

Your review must ensure that every aspect of the {task} is spot on, all assignment requirements are fully met, and answers are 100% accurate.

So I checked this prompt too and got this result:

Apparently the IQ reference had minimal impact, showing that verbosity doesn’t equate to utility.

You can try any prompt you have (up to 500 words). But how does it work? And can you trust the results, or is it just random green and red coloring?

How Does It Work?

Prompt Debloat uses a method called token ablation (also known as input perturbation) to figure out which words in your prompt actually matter. The basic idea is simple: it removes words from your prompt one by one and sees how much the model’s response changes.

If removing a word makes little or no difference to the output, that word is probably not pulling its weight — and might just be wasting tokens. On the other hand, if removing a word does change the response significantly, it’s likely doing important work.

This process helps you trim down your prompt by spotting the “bloat” — unnecessary words that can safely be cut to save on cost and improve clarity.
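The idea described above can be sketched in a few lines. This is not Prompt Debloat's actual implementation: it splits on whitespace instead of real tokens, it uses `difflib.SequenceMatcher` from the Python standard library as a stand-in for whatever comparison the tool performs, and `toy_model` is a hypothetical placeholder for a real LLM call. The names `ablation_scores`, `debloat`, and the `threshold` value are likewise assumptions.

```python
from difflib import SequenceMatcher


def ablation_scores(prompt, respond):
    """Score each word by how much the response changes when that word is removed.

    `respond` is any callable mapping a prompt string to a response string.
    A score of 0.0 means the response did not change at all.
    """
    words = prompt.split()
    baseline = respond(prompt)
    scores = []
    for i, word in enumerate(words):
        ablated = " ".join(words[:i] + words[i + 1:])
        similarity = SequenceMatcher(None, baseline, respond(ablated)).ratio()
        scores.append((word, 1.0 - similarity))
    return scores


def debloat(prompt, respond, threshold=0.05):
    """Keep only the words whose removal changes the response by more than `threshold`."""
    return " ".join(w for w, s in ablation_scores(prompt, respond) if s > threshold)


def toy_model(prompt):
    """Hypothetical stand-in for an LLM: reacts only to two keywords."""
    text = prompt.lower()
    length = "short" if "short" in text else "long"
    task = "summary" if "summarize" in text else "reply"
    return f"Here is a {length} {task}."


prompt = "Please kindly summarize this in a short form thank you"
for word, score in ablation_scores(prompt, toy_model):
    print(f"{word:10s} {score:.2f}")
print(debloat(prompt, toy_model))
```

With a real model, responses vary from run to run, so a practical version would probably need fixed sampling settings or several runs per ablation before the scores can be trusted.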

Limitations of Token Ablation

Context Matters: A word that seems unimportant in one prompt might be critical in another. Results aren’t always universal.

Too Many Possibilities: Testing every combination of tokens quickly becomes overwhelming as the prompt gets longer, so most tools test one token at a time.

Subtle Changes: Sometimes a word might influence the tone or nuance in ways that aren’t easy to measure just by comparing probabilities or visible output.
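The "too many possibilities" point is easy to check with a little arithmetic. The sketch below counts model calls for a prompt of n words, assuming one call per ablated variant; the function names are made up for this example.

```python
def single_ablation_runs(n_words):
    """Model calls needed to remove each word exactly once."""
    return n_words


def all_subset_runs(n_words):
    """Model calls needed to test every non-empty set of removed words."""
    return 2 ** n_words - 1


for n in (10, 50, 500):
    digits = len(str(all_subset_runs(n)))
    print(f"{n} words: {single_ablation_runs(n)} single ablations, "
          f"a {digits}-digit number of subsets")
```

One-at-a-time testing grows linearly with prompt length, while exhaustive testing doubles with every added word. That is why interactions between words (two words that only matter together) can slip past a one-at-a-time analysis.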

So now that you know how it works and what its limitations are, I invite you to run your most complex prompts through Prompt Debloat and share the results in the comments below.

The Future of Prompting: Less Magic, More Simplicity

Not long ago, I attended a meetup where the organizers shared their experience building an AI agent to assist with software engineering tasks. As expected, they kicked things off by talking about prompt engineering — how they refined their inputs, added detailed instructions, and experimented with all sorts of formatting tricks to boost performance.

It sounded like classic prompt wizardry: custom templates, carefully worded system messages, and all the usual prompt engineering lore.

But then they said something that caught everyone off guard.

In the end, most of the improvements didn’t come from some secret prompt formula. What actually made the biggest difference? Just using Cursor’s built-in prompt suggestions — simple, well-structured, and focused on clear output formats. That was it. No prompt maximalism, no elaborate frameworks. The tooling alone lifted their agent far beyond the baseline.

That moment really stayed with me.

It confirmed something I’ve been noticing for a while: the future of prompting isn’t about conjuring magic words. It’s about thoughtful design — clear intent, less noise, and trusting the model to do its job without micromanagement.

Prompt engineering isn’t dead. But the “more is more” era is fading. The real power now lies in restraint — knowing what to say, what to leave out, and how to shape the interaction like a good interface, not a spellbook.

The best prompts aren’t the longest. They’re the clearest.

Let’s stop wasting tokens — and start communicating better with our models.

Good piece Shmulik!

I’m definitely of the opinion that a lot of prompt engineering is complicated for the sake of it.

At the end of the day, one of the core value propositions of AI is democratizing access.

And if that’s one of the goals, why would the developers not actively try to work against the need to have prompts that are 5000 words long?

Shouldn’t they work towards including as many users as possible, with as little “prompting knowledge” as possible?

Clarity, in my opinion, remains the most important aspect.

Prompt debloating is a useful strategy, as too often, complexity actually shows up when there’s not enough clarity. And I fully agree that the newer reasoning models don’t need the kind of heavy scaffolding we used to build around prompts.

But I also think prompt engineering still has a lot of power. Great prompts don’t just shape the output, they create a framework for how you think about the task, how you want the model to think, and what kind of result you want to shape.

And when it comes to context, I get the concern on the API side, but most average users don’t even perceive token costs. And even with APIs, I’ve run enough experiments to know this: the more relevant context I give, the better the output. Not to constrain the model, but to set the concept well. That’s something both Anthropic and OpenAI emphasize, too.

I see simplicity not as shorter prompts, but clearer ones.