Demystifying Coding Agents

Simple Concepts Can Take You a Long Way

The transition from “Chat” to “Agent” in software development is often framed as a mystical leap in artificial intelligence. However, from a systems engineering perspective, the shift is actually a result of standardizing the interface between three specific components: The Reasoning Engine, External Tooling, and Context Management.

Whether you are using Cursor, Windsurf, Claude Code, or a custom open-source setup, the underlying architecture follows a repeatable pattern that manages state and execution over a stateless core.

The secret? The advancements in coding agents today aren’t about “magic”; they come down to these very simple concepts working in unison.

1. The LLM: The Reasoning Engine

At the center of any agent is the Large Language Model. In 2026, we’ve moved past the “autocomplete” era. The leap from GPT-4 to the current generation (Claude 4.5, GPT-5) wasn’t just about parameter count; it was the shift toward Native Reasoning.

Models are now trained specifically to utilize larger context windows (2M+ tokens) without losing the “needle in the haystack,” and they are fine-tuned on synthetic “Chain of Thought” data.

This allows the LLM to act as a CPU with a massive, high-fidelity RAM. It doesn’t just predict the next token; it simulates the logic of the code before typing it.

The Planning Loop: Before writing a single line of code, a robust agent executes a “Plan → Critique → Act” cycle. It writes a plan, checks if that plan breaks anything, and then executes.
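A minimal sketch of the “Plan → Critique → Act” cycle described above. The function names, the prompt prefixes, and the scripted `fake_llm` are all hypothetical stand-ins for a real model call; they only illustrate the control flow, not how any particular agent implements it.

```python
from typing import Callable

# Hypothetical stand-in for a model call: takes a prompt, returns text.
LLM = Callable[[str], str]


def plan_critique_act(task: str, llm: LLM, act: Callable[[str], str],
                      max_revisions: int = 2) -> str:
    """Draft a plan, let the model critique it, revise, then act."""
    plan = llm(f"PLAN: {task}")
    for _ in range(max_revisions):
        critique = llm(f"CRITIQUE: {plan}")
        if critique.strip().upper().startswith("OK"):
            break  # the critique found nothing to fix
        plan = llm(f"REVISE: {plan}\nFEEDBACK: {critique}")
    # In this sketch the last plan is executed even if revisions ran out.
    return act(plan)


def fake_llm(prompt: str) -> str:
    """Scripted replies so the example runs without a real model."""
    if prompt.startswith("PLAN:"):
        return "edit utils.py"
    if prompt.startswith("CRITIQUE:"):
        return "OK" if "run tests" in prompt else "Missing: run tests"
    if prompt.startswith("REVISE:"):
        first_line = prompt.splitlines()[0]
        return first_line.removeprefix("REVISE: ") + ", then run tests"
    return ""


result = plan_critique_act("rename helper", fake_llm,
                           act=lambda plan: f"executed: {plan}")
print(result)
```

The point of the separate critique step is that the plan is checked before anything touches the codebase, so a bad plan costs a few tokens instead of a broken build.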

The Gateway (API Standardization)

One of the silent drivers of the agent explosion is the standardization of the interface between the “Brain” and the machine.

The Great Equalizer: Libraries like LiteLLM and standards like OpenAI’s structured outputs mean you can swap a local Llama-3 model for Claude 4.5 Opus with a single line of config. This “pluggability” allows agents to remain model-agnostic.

The Power Play: Conversely, the “Big Three” (Anthropic, OpenAI, Google) often bake specialized headers into their APIs specifically for tool-calling. If you’re a big enough provider, you don’t follow the interface; you are the interface, forcing agent frameworks to write custom logic just to squeeze out that extra 5% of reliability.
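The “pluggability” described under The Great Equalizer can be sketched as a thin gateway that hides each provider behind one call signature. This is not LiteLLM’s API; the `Gateway` class, the backend functions, and the model names are hypothetical and the backends return canned strings instead of calling a real model.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Message = Dict[str, str]
Backend = Callable[[List[Message]], str]


def local_backend(messages: List[Message]) -> str:
    # Stand-in for a locally hosted model.
    return "[local] " + messages[-1]["content"]


def hosted_backend(messages: List[Message]) -> str:
    # Stand-in for a hosted provider; provider-specific quirks would live here.
    return "[hosted] " + messages[-1]["content"]


# Hypothetical registry: one entry per provider adapter.
BACKENDS: Dict[str, Backend] = {
    "local/llama": local_backend,
    "hosted/claude": hosted_backend,
}


@dataclass
class Gateway:
    model: str  # the single line of config that selects the backend

    def complete(self, messages: List[Message]) -> str:
        return BACKENDS[self.model](messages)


messages = [{"role": "user", "content": "hi"}]
print(Gateway(model="local/llama").complete(messages))
print(Gateway(model="hosted/claude").complete(messages))
```

The agent code only ever calls `complete`, so swapping models is a change to the `model` string. The custom logic that the Power Play forces on frameworks would sit inside the individual backend functions.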

2. Tools & Instructions: The Execution Layer

If the LLM is the Navigator, the Tools are the Driver. An LLM on its own can only talk; Tools give it “hands.” The leap we’ve seen recently is about the orchestration of these hands through a specific set of instructions.

Tools

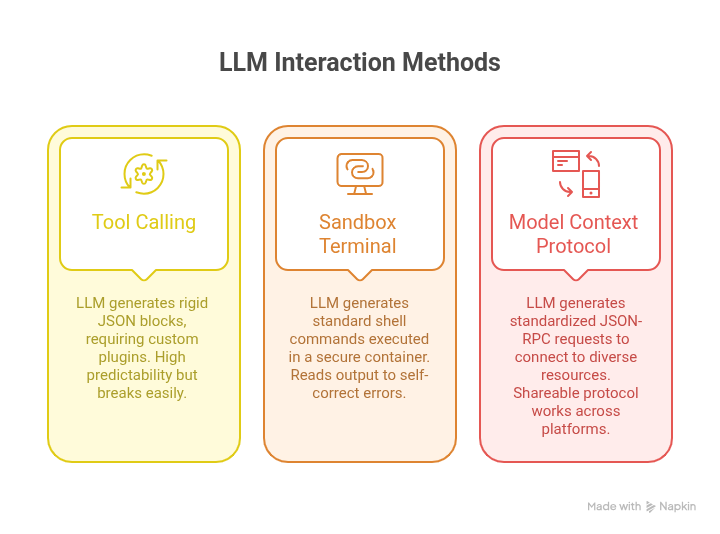

Early tool calling was clunky, relying on rigid JSON blocks that often broke. Today, we use flexible, standardized execution environments:

Tool Calling: Coding agents ship with proprietary tools for task management and file editing. Tools like apply_diff or undo_rewrite allow for surgical changes to code, and tools like todo_list keep track of progress. Each coding agent uses a different set of tools, which defines a big part of its DNA.

Sandboxed Terminal Execution: Modern agents have direct access to a pseudo-terminal (PTY). The LLM generates standard shell commands (grep, find, pytest, sed) that run in a secure, isolated sandbox. By capturing stdout and stderr, the agent can “see” a compiler error or a failing test and self-correct, closing the loop between thinking and doing.

Model Context Protocol (MCP): MCP is the open standard that connects AI assistants to external systems. It decouples the tool logic from the agent UI. It allows a local or remote server to expose its resources, such as a database schema or a Jira board, via a unified JSON-RPC protocol.

The agent doesn’t need a custom plugin for every service; it only needs to speak MCP, and the server handles the rest.
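The “see the error and self-correct” step of terminal execution can be sketched as follows. This is only the capture half: `subprocess.run` provides no isolation, so a real agent would run the command inside a sandbox or container. `CommandResult`, `run_command`, and `as_observation` are hypothetical names.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class CommandResult:
    exit_code: int
    stdout: str
    stderr: str


def run_command(argv: list[str], timeout: float = 10.0) -> CommandResult:
    """Run a command and capture both output streams as text."""
    proc = subprocess.run(argv, capture_output=True, text=True, timeout=timeout)
    return CommandResult(proc.returncode, proc.stdout, proc.stderr)


def as_observation(result: CommandResult) -> str:
    """Format the result as text that can be appended to the model's context."""
    if result.exit_code == 0:
        status = "succeeded"
    else:
        status = f"failed (exit {result.exit_code})"
    return (f"Command {status}\n"
            f"--- stdout ---\n{result.stdout}"
            f"--- stderr ---\n{result.stderr}")


# Simulate a failing test run with a tiny Python child process.
failing = run_command([
    sys.executable, "-c",
    "import sys; print('1 test ran'); sys.exit('AssertionError: expected 2')",
])
print(as_observation(failing))
```

Feeding the formatted observation back into the next model call is what closes the loop: the model reads the failure the same way a developer reads a terminal.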

Instructions

This is the “Secret Sauce.” It’s why Cursor, Windsurf, and Claude Code can all use the same Claude 4.5 Sonnet model but produce completely different results.

The System Prompt is a massive, invisible set of instructions that acts as the agent’s “Operating Manual.” It tells the model how to use its utility belt:

“Before you edit a file, you must search the codebase for related symbols. After every shell command, analyze the output for hidden warnings.”

The difference in how these prompts are written, some prioritizing speed, others safety and testing, is what defines the product’s DNA. One agent feels like a cautious Senior Architect, while another feels like a rapid-fire Prototyper, all based on the orchestration of the same tools.

3. Context Management & Memory: The State Machine

Since the LLM is a stateless engine, the Agent framework must maintain a stateful environment. This is the “Operating System” of the agent, and it’s where the heaviest engineering complexity lies.

The Context Window Paradox

A few years ago, we struggled with 8k token windows. In 2026, we have models with 2M+ tokens. However, a bigger bucket doesn’t automatically mean better results. The paradox is that as the window grows, our expectations grow faster. We no longer ask for a single snippet; we expect agents to refactor entire modules, maintain architectural consistency across microservices, and debug complex integration errors.

This “mission creep” means that even with millions of tokens, context remains the most precious currency in the system. More noise increases the Needle in a Haystack risk. The more ‘fluff’ you add to support these massive tasks, the more likely the LLM is to miss the one critical line of code that matters.

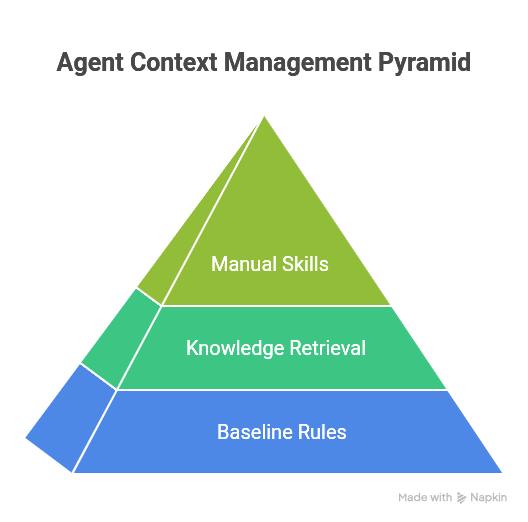

To manage this, we use Context Caching to keep the codebase “hot” and affordable in GPU memory, and we structure the “State Machine” into three distinct layers:

A. The Baseline (Static Rules)

This is the “BIOS” or system configuration: rules that are always true.

Global Rules: Project-wide constraints (e.g., .cursorrules, .instructions.md, .windsurfrules) like “Never use external CSS libraries.”

Spatial Context: Directory-specific rules (e.g., AGENTS.md). The agent only loads the “map” for its current folder, keeping the context window lean and focused on the immediate task.
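A minimal sketch of how the two kinds of static rules above might be collected, assuming global rule files sit at the project root and each folder may carry its own AGENTS.md. The `load_rules` function and the lookup order are hypothetical; real agents differ in which files they read and how they merge them.

```python
import tempfile
from pathlib import Path

GLOBAL_RULE_FILES = (".cursorrules", ".windsurfrules")
LOCAL_RULE_FILE = "AGENTS.md"


def load_rules(project_root: Path, working_dir: Path) -> list[str]:
    """Return global rules first, then only the current folder's local rules."""
    rules: list[str] = []
    for name in GLOBAL_RULE_FILES:
        path = project_root / name
        if path.is_file():
            rules.append(path.read_text())
    local = working_dir / LOCAL_RULE_FILE
    if local.is_file():
        rules.append(local.read_text())
    return rules


# Build a throwaway project so the example is self-contained.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / ".cursorrules").write_text("Never use external CSS libraries.")
    (root / "api").mkdir()
    (root / "web").mkdir()
    (root / "api" / "AGENTS.md").write_text("api: handlers live in routes/.")
    (root / "web" / "AGENTS.md").write_text("web: components live in ui/.")
    rules_for_api = load_rules(root, root / "api")

print(rules_for_api)
```

While working in the api folder, the web folder’s rules never enter the context, which is the “lean map” behavior described above.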

B. The Knowledge (On-Demand Retrieval)

This layer fetches information only when the agent realizes it doesn’t know something.

Codebase RAG: Using Vector Search (for concepts) and Code Graphs (for definitions) to pluck specific snippets. It acts as the agent’s “Library.”

Long-Term Memory: Systems like Windsurf’s Cascade or Copilot index your past PRs and corrections. This creates a “Personal Profile” so the agent learns your specific habits over time.
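The vector-search half of Codebase RAG can be illustrated with a toy retriever. It uses word counts and cosine similarity as a stand-in for learned embeddings and a vector database, so it only shows the ranking idea; the snippets and function names are made up for the example.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag of lowercase words."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, snippets: dict[str, str], k: int = 1) -> list[str]:
    """Return the names of the k snippets most similar to the query."""
    q = embed(query)
    ranked = sorted(snippets,
                    key=lambda name: cosine(q, embed(snippets[name])),
                    reverse=True)
    return ranked[:k]


SNIPPETS = {
    "auth.py": "def verify_token(token): check the jwt signature and expiry",
    "billing.py": "def charge_card(amount): create a stripe payment intent",
    "cache.py": "def get_cached(key): read a value from redis",
}

top = retrieve("where do we verify the jwt token", SNIPPETS)
print(top)
```

Only the top-ranked snippet is placed into the context, rather than all three files, which is how retrieval keeps the window small as the codebase grows.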

C. The Manuals (Just-in-Time Skills)

Following the Anthropic Agent Skills (agentskills.io) standard, “Skills” are dormant manuals.

JIT Loading: You might have 500 specialized skills (e.g., “AWS Deployment,” “Stripe Integration”). The agent doesn’t “know” them by heart; it “copy-pastes” the relevant manual into its brain only when the task triggers that specific need.

Native Contextual Awareness: Modern agents now use “Context Caching.” Instead of re-processing your entire 50,000-line codebase at full price with every message, the API “remembers” the base code, charging a reduced rate for the cached portion and full price only for the new tokens. This makes “Bigger Context” not just a technical feat, but an economic one.
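The JIT loading of skills described above can be sketched like this: short descriptions stay in the context at all times, while the full manual is pasted in only when a task triggers it. The `Skill` class, the sample manuals, and the keyword trigger are hypothetical; in practice the model itself typically picks a skill from the index rather than relying on a keyword match.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    name: str
    description: str  # short, always present in the context
    manual: str       # long, loaded only on demand


SKILLS = [
    Skill("stripe", "Stripe integration",
          "Use idempotency keys on every charge."),
    Skill("aws", "AWS deployment",
          "Deploy with the staging profile first."),
]


def skill_index(skills: list[Skill]) -> str:
    """The cheap part: one line per skill."""
    return "\n".join(f"- {s.name}: {s.description}" for s in skills)


def select_skills(task: str, skills: list[Skill]) -> list[Skill]:
    # Hypothetical trigger: the skill name appears as a word in the task.
    words = set(task.lower().split())
    return [s for s in skills if s.name in words]


def build_context(task: str, skills: list[Skill]) -> str:
    parts = ["Available skills:\n" + skill_index(skills)]
    parts += [f"# Manual: {s.name}\n{s.manual}"
              for s in select_skills(task, skills)]
    parts.append("Task: " + task)
    return "\n\n".join(parts)


context = build_context("add a stripe checkout flow", SKILLS)
print(context)
```

With 500 skills, the index costs 500 short lines, while the manuals cost nothing until one is needed.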

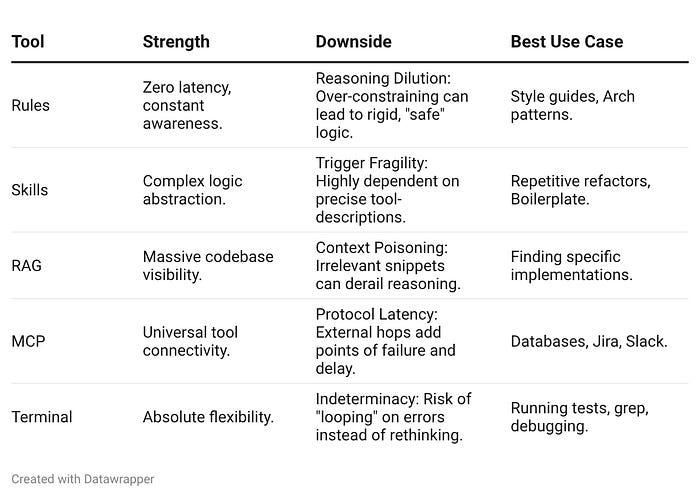

4. The Cumulative Toolkit: Layered Defense

The advancement of the field isn’t about the newest tool replacing the old one; it’s about building a Layered Defense. No single method is a silver bullet, so we stack them based on their specific strengths and operational costs.

We use Static Rules for safety, RAG for scale, and Skills for precision. We don’t choose one; we layer them so the system has multiple chances to find the right context before it fails.
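One way to picture the layering is a context assembler that fills a fixed token budget layer by layer. The priority order (rules, then skills, then retrieved snippets), the word-count token estimate, and the budget of 20 are all assumptions made for illustration, not a description of any specific agent.

```python
def estimate_tokens(text: str) -> int:
    # Crude assumption: roughly one token per whitespace-separated word.
    return len(text.split())


def assemble_context(layers: list[tuple[str, list[str]]],
                     budget: int) -> list[str]:
    """Add items layer by layer, skipping any item that would exceed the budget."""
    chosen: list[str] = []
    used = 0
    for layer_name, items in layers:
        for item in items:
            cost = estimate_tokens(item)
            if used + cost > budget:
                continue  # does not fit; a later, smaller item still might
            chosen.append(f"[{layer_name}] {item}")
            used += cost
    return chosen


layers = [
    ("rules", ["Never use external CSS libraries."]),
    ("skills", ["Use idempotency keys on every charge."]),
    ("rag", [
        "def charge_card(amount): create a stripe payment intent",
        "def get_cached(key): read a value from redis",
    ]),
]

context_items = assemble_context(layers, budget=20)
for line in context_items:
    print(line)
```

Because the always-true rules are added first, they survive even when the budget forces retrieved snippets to be dropped.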

5. The Punchline: Standardization as the Catalyst

The reason these tools finally feel like a “Senior Partner” today isn’t because the models became smarter. It’s because we standardized the system.

By moving toward open protocols like MCP and Agent Skills, we have replaced custom-coded complexity with composability. You can write a skill once and share it across Cursor, Windsurf, or your own CLI.

Once you strip away the marketing, you realize that ‘Agentic AI’ is mostly just a very sophisticated while loop wrapped around a copy-paste mechanism. But in engineering, a sufficiently advanced loop is indistinguishable from intelligence.

But that is the beauty of it. It’s not magic; it’s a highly efficient, automated loop of terminal calls and context-stuffing. When you apply that simple loop at scale, fueled by an engaged community building shared “manuals” and servers, the result is a system that works at a professional level.
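The loop itself fits in a few lines. In this sketch the model is a scripted function and the only tool returns a placeholder string; `agent_loop`, the action dictionary shape, and the step limit are hypothetical choices made so the example runs on its own.

```python
from typing import Callable


def agent_loop(task: str,
               model: Callable[[list[str]], dict],
               tools: dict[str, Callable[[str], str]],
               max_steps: int = 5) -> str:
    """Ask the model for an action, run it, append the result, repeat."""
    history = [f"task: {task}"]
    for _ in range(max_steps):
        # The model is stateless: it sees the full history on every call.
        action = model(history)
        if action["type"] == "finish":
            return action["answer"]
        observation = tools[action["tool"]](action["input"])
        # The "copy-paste mechanism": tool output goes back into the context.
        history.append(f"{action['tool']}({action['input']}) -> {observation}")
    return "stopped: step limit reached"


def scripted_model(history: list[str]) -> dict:
    """Stand-in for an LLM: read one file, then finish."""
    if len(history) == 1:
        return {"type": "tool", "tool": "read_file", "input": "app.py"}
    return {"type": "finish",
            "answer": f"done after {len(history) - 1} tool call(s)"}


tools = {"read_file": lambda path: f"<contents of {path}>"}
result = agent_loop("fix bug", scripted_model, tools)
print(result)
```

Everything else in this article (rules, retrieval, skills, MCP servers) changes what goes into `history` or `tools`; the loop stays the same.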

The next time you hear about a “groundbreaking” new trend in the AI world, there is a high chance it boils down to one of these simple concepts. And if it doesn’t? That’s when things get truly exciting.

The future of coding isn’t only about building “smarter” brains; it’s about building better connections between the Brain, the Tools, and the Memory. Simple concepts, standardization, and a community that shares its manuals will take us much further than a black box ever could.

The state machine framing is useful. Understanding coding agents as state machines that transition between planning, executing, and verifying helps explain why some agent setups work and others don't. What I've found in practice is that the verification state is where most implementations fall short. My agent has a strict rule: never mark a task complete without proving it works. Run the code, check the output, test edge cases, show proof. That single constraint improved reliability more than any model upgrade. The MCP section is also relevant. Having standardized tool integration means agents can extend their own capabilities systematically. I explored this architecture in depth: https://thoughts.jock.pl/p/ai-agent-self-extending-self-fixing-wiz-rebuild-technical-deep-dive-2026

Nice breakdown! One concept I expected to gain traction but hasn't is meta-prompting - dynamically enriching prompts with contextual instructions before executing them, rather than relying on static generic rules or late compensation by other tools. For example, we use it in Apiiro to weave contextual security guidance into coding prompts of our customers, resulting in very secure code generation. Are you familiar with any notable use cases? Do you see this approach becoming more prevalent?